LLaVA: Visual Instruction Tuning


NeurIPS 2023 (Oral) | Microsoft Research | arXiv 2304.08485 | haotian-liu/LLaVA | llava-vl.github.io

Motivation

Contribution

Method

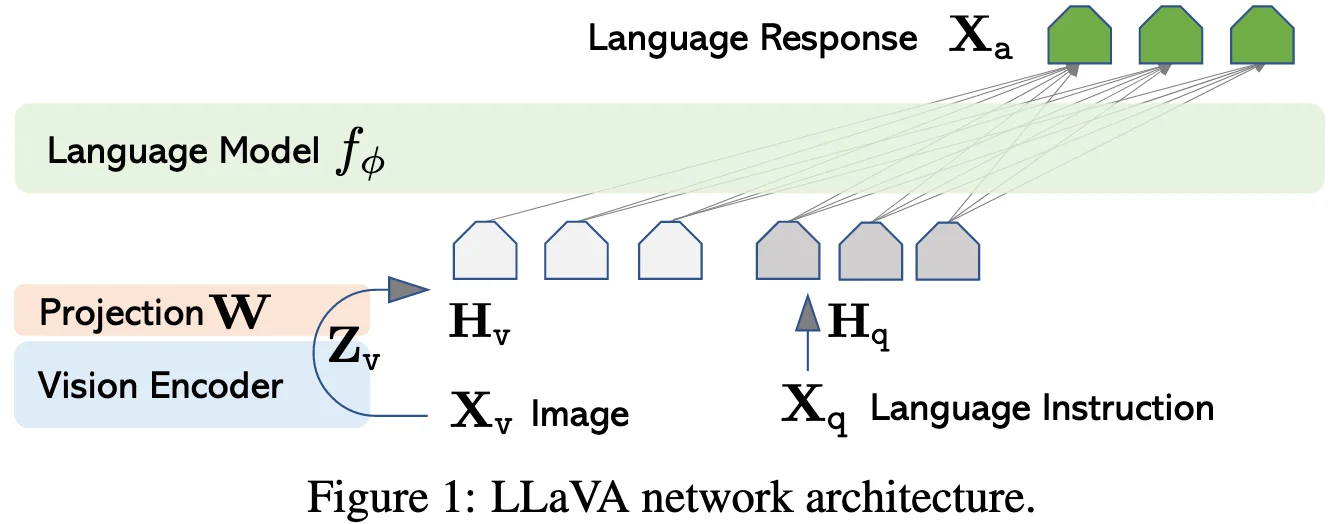

Architecture

Large Language Model: Vicuna

Vision Encoder: the pre-trained CLIP visual encoder ViT-L/14

Adapter: a simple linear layer is employed here; more sophisticated alternatives, such as gated cross-attention in Flamingo and Q-Former in BLIP-2, could optionally be substituted.

- Cross-modal alignment between the visual space and the text space

- Visual feature compression
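
As a minimal sketch of the linear adapter, the snippet below maps each visual feature vector into the word embedding space with a single weight matrix and then joins the projected visual tokens with text embeddings into one input sequence. The function names, the toy dimensions, and the choice to place visual tokens before the text are assumptions made for illustration; a real implementation would use a tensor library and the actual CLIP and Vicuna feature widths.

```python
import random


def make_linear_projector(d_vision, d_lm, seed=0):
    """Return a weight matrix W with shape (d_lm, d_vision), randomly initialised."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 0.02) for _ in range(d_vision)] for _ in range(d_lm)]


def project(W, visual_features):
    """Map every visual feature z (length d_vision) to h = W z (length d_lm)."""
    return [
        [sum(w * z for w, z in zip(row, feat)) for row in W]
        for feat in visual_features
    ]


def build_input_sequence(visual_tokens, text_embeddings):
    """Concatenate visual tokens and text embeddings along the sequence axis."""
    return visual_tokens + text_embeddings


# Toy sizes (hypothetical), chosen only to keep the example small.
D_VISION, D_LM, NUM_PATCHES, NUM_TEXT = 8, 16, 4, 3

rng = random.Random(1)
Z_v = [[rng.random() for _ in range(D_VISION)] for _ in range(NUM_PATCHES)]  # stand-in for encoder output
text = [[rng.random() for _ in range(D_LM)] for _ in range(NUM_TEXT)]        # stand-in for word embeddings

W = make_linear_projector(D_VISION, D_LM)
H_v = project(W, Z_v)                      # visual tokens, now in the LLM embedding width
sequence = build_input_sequence(H_v, text)

print(len(sequence), len(sequence[0]))     # prints: 7 16
```

Because the adapter is a per-token linear map, it keeps the number of visual tokens unchanged; alternatives such as Q-Former instead reduce the visual features to a fixed, smaller set of query tokens.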

Training Recipe

Pre-training for Feature Alignment:

Fine-tuning End-to-End:
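
The two stages differ mainly in which modules receive gradient updates. In the LLaVA paper, the first stage keeps the vision encoder and the LLM frozen and trains only the projection layer, while the second stage keeps the vision encoder frozen and updates both the projection layer and the LLM. The sketch below records that split as a small configuration table; the class, stage, and module names are hypothetical and not taken from the LLaVA codebase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageConfig:
    """Which modules are updated during one training stage."""
    name: str
    train_vision_encoder: bool
    train_projector: bool
    train_llm: bool


# Stage names are illustrative labels for the two steps of the recipe.
STAGES = {
    "feature_alignment": StageConfig("feature_alignment", False, True, False),
    "end_to_end_finetune": StageConfig("end_to_end_finetune", False, True, True),
}


def trainable_modules(stage):
    """Return the names of the modules that are unfrozen in the given stage."""
    cfg = STAGES[stage]
    flags = {
        "vision_encoder": cfg.train_vision_encoder,
        "projector": cfg.train_projector,
        "llm": cfg.train_llm,
    }
    return [name for name, enabled in flags.items() if enabled]


print(trainable_modules("feature_alignment"))    # ['projector']
print(trainable_modules("end_to_end_finetune"))  # ['projector', 'llm']
```

In a framework such as PyTorch, a table like this would typically be applied by toggling `requires_grad` on each module's parameters before building the optimizer.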

Data Recipe

GPT-assisted Visual Instruction Data Generation
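
The data generation step in the paper feeds a text-only GPT model a symbolic description of each image (captions and object bounding boxes) and asks it for one of three response types: conversation, detailed description, or complex reasoning. The sketch below only assembles such a request as a list of chat messages; it does not call any API. The function names and the instruction wording are hypothetical placeholders, not the prompts used by the authors.

```python
RESPONSE_TYPES = ("conversation", "detailed_description", "complex_reasoning")

# Placeholder instructions (assumed wording, for illustration only).
INSTRUCTIONS = {
    "conversation": "Write a multi-turn Q&A about the image as if you could see it.",
    "detailed_description": "Write a detailed description of the image.",
    "complex_reasoning": "Ask and answer a question that requires reasoning about the image.",
}


def format_context(captions, boxes):
    """Render captions and (category, (x1, y1, x2, y2)) boxes as plain text."""
    lines = ["Captions:"]
    lines += [f"- {caption}" for caption in captions]
    lines.append("Objects (category: x1, y1, x2, y2, normalized):")
    for name, coords in boxes:
        lines.append(f"- {name}: " + ", ".join(f"{v:.2f}" for v in coords))
    return "\n".join(lines)


def build_messages(captions, boxes, response_type):
    """Build chat-style messages for a language-only model; the image itself is never sent."""
    if response_type not in RESPONSE_TYPES:
        raise ValueError(f"unknown response type: {response_type}")
    return [
        {"role": "system", "content": INSTRUCTIONS[response_type]},
        {"role": "user", "content": format_context(captions, boxes)},
    ]


messages = build_messages(
    captions=["A person rides a bike down a street."],
    boxes=[("person", (0.10, 0.20, 0.50, 0.90)), ("bicycle", (0.15, 0.50, 0.55, 0.95))],
    response_type="conversation",
)
print(messages[1]["content"])
```

Keeping the image context purely textual is what lets a language-only model produce instruction-following data about images it never sees.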

Experiment

Reference

Question

NeurIPS 2023 (Oral) - ReadPaper

Motivations

Contributions

Method

[image]

Task

Data: GPT-assisted Visual Instruction Data Generation

Multi-modal data

- Image-text pairs: CC, LAION

Input/Output

Multi-Modal Tokenizer

Image Tokenizer

- Encoder: the pre-trained CLIP visual encoder ViT-L/14

- Projector: connect image features into the word embedding space.

- (this paper) a simple linear layer

- Gated cross-attention in Flamingo

- Q-Former in BLIP-2

Text Tokenizer

LLM Decoder (Vicuna)

Training

Pre-training for Feature Alignment

Fine-tuning End-to-End