HunyuanOCR Technical Report

Tencent Hunyuan Vision Team

arXiv 2511.19575

Tencent-Hunyuan/HunyuanOCR

tencent/HunyuanOCR

Motivation

- Traditional OCR systems rely on a modular pipeline architecture that typically includes, but is not limited to, text detection, text recognition, document layout analysis, named entity recognition, and optional text translation modules. This design inevitably causes cumulative error propagation and raises deployment and maintenance overhead. -> End-to-End

- While leading general VLMs (e.g., Gemini, Qwen-VL) deliver superior OCR performance, they often entail excessive computational overhead and high latency due to their massive parameter scales. -> OCR-specific, lightweight (1B)

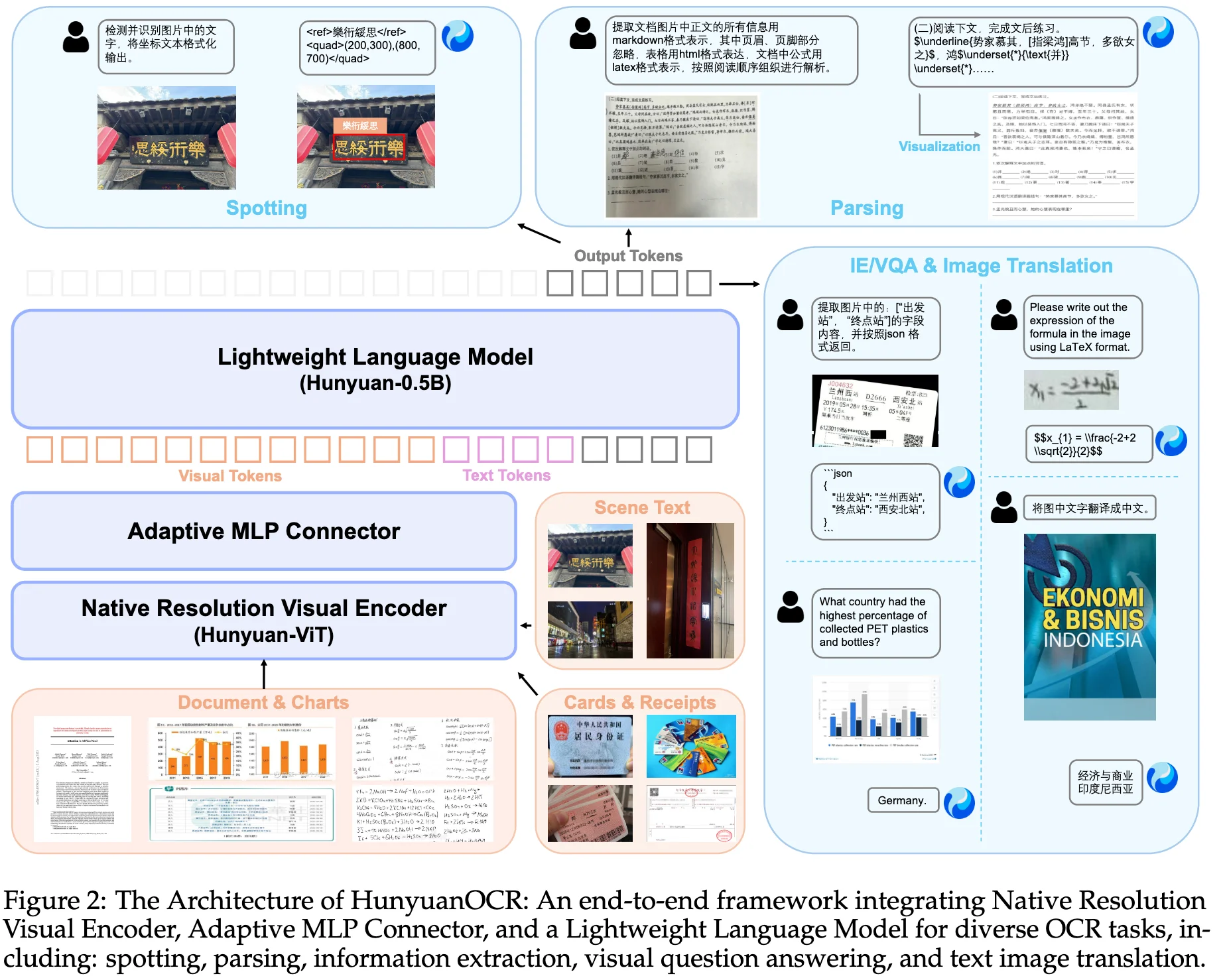

- unified multi-task modeling, including text spotting, document parsing, information extraction, visual question answering, and text image translation.

Method

Model Architecture

Large Language Model (0.5B): HunyuanOCR model is initialized with pre-trained weights from Hunyuan-0.5B with xD-RoPE.

Native-Resolution Vision Encoder (0.4B): SigLIP-v2-400M pre-trained model.

Vision-Language Adapter:

End-to-End Optimization

Data Recipe

Data Collection

- public benchmarks

- web crawling

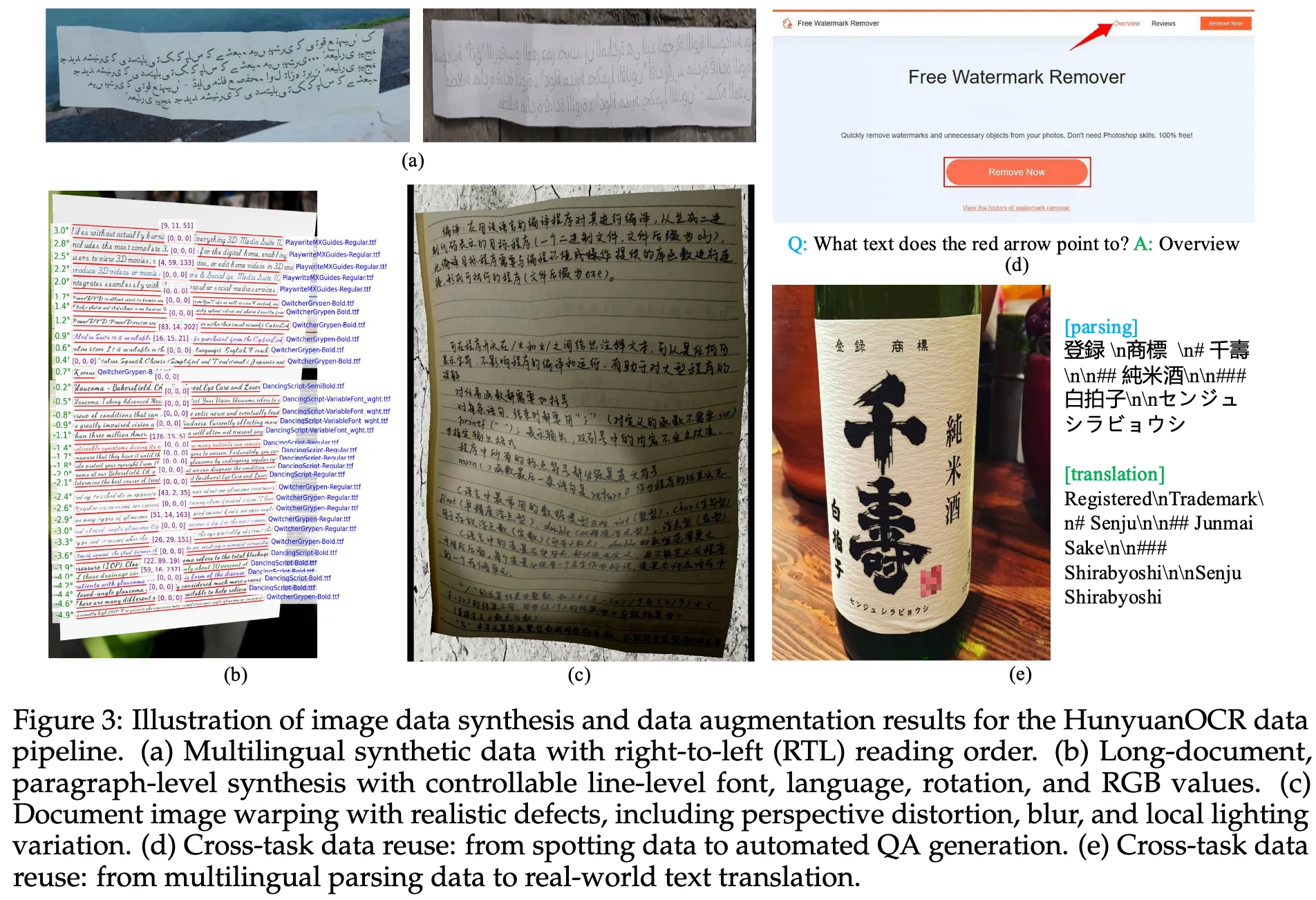

- synthetic data

200 million image-text pairs spanning nine major real-world scenarios—street views, documents, advertisements, handwritten text, screenshots, cards/certificates/invoices, game interfaces, video frames, and artistic typography—and covering more than 130 languages.

Data Synthesis

Data Augmentation

- geometric deformation via control-point manipulation to emulate folds, curves, and perspective distortions.

- imaging degradation with motion blur, Gaussian noise, and compression artifacts.

- illumination perturbations that model global/local lighting variations, shadows and reflections.
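Two of the augmentation families above (imaging degradation and illumination perturbation) can be sketched in a few lines. This is a minimal illustration only, not the report's pipeline: the function names, kernel size, noise level, and gain values are all assumptions. It works on grayscale images stored as nested lists so that it needs only the standard library, whereas a real pipeline would more likely use NumPy or OpenCV. Geometric deformation and compression artifacts are not covered here.

```python
import random


def motion_blur_horizontal(img, k=3):
    """Average each pixel with its k-1 right-hand neighbours (edge-clamped)."""
    w = len(img[0])
    return [
        [sum(row[min(x + d, w - 1)] for d in range(k)) / k for x in range(w)]
        for row in img
    ]


def add_gaussian_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise and clip to the valid range [0, 255]."""
    return [
        [min(255.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row]
        for row in img
    ]


def illumination_gradient(img, gain_left=0.6, gain_right=1.2):
    """Scale brightness linearly from left to right to mimic uneven lighting."""
    w = len(img[0])
    span = max(w - 1, 1)
    return [
        [
            min(255.0, p * (gain_left + (gain_right - gain_left) * x / span))
            for x, p in enumerate(row)
        ]
        for row in img
    ]


def degrade(img, rng):
    """Chain the three perturbations; the order here is an arbitrary choice."""
    img = illumination_gradient(img)
    img = motion_blur_horizontal(img, k=3)
    return add_gaussian_noise(img, sigma=5.0, rng=rng)
```

Passing a seeded `random.Random` instance as `rng` keeps the noise reproducible, which makes it easier to inspect individual augmented samples.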

Training Recipe

| Stages | Pre-training: Stage-1 | Pre-training: Stage-2 | Pre-training: Stage-3 | Pre-training: Stage-4 | Reinforcement Learning |
|---|---|---|---|---|---|
| Purpose | Vision-Language Alignment | Multimodal Pre-training | Long-context Pre-training | Application-oriented SFT | - |
| Trainable Params | ViT & Adapter | All | All | All | - |
| Learning Rate | 3e-4 → 3e-5 | 2e-4 → 5e-5 | 8e-5 → 5e-6 | 2e-5 → 1e-6 | - |
| Training Tokens | 50B | 300B | 80B | 24B | - |
| Sequence Length | 8k | 8k | 32k | 32k | - |
| Data Composition | | | | | - |

A small proportion of plain text is mixed in to preserve the core linguistic capabilities of the language model.
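The four pre-training stages in the table can be written down as a small configuration, which makes the total token budget easy to check. This is a sketch under stated assumptions: the `Stage` class and `lr_at` helper are hypothetical names, "8k" and "32k" are read as 8192 and 32768 tokens, and the cosine decay shape is assumed, since the table gives only the start and end learning rates.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    purpose: str
    lr_start: float
    lr_end: float
    tokens: int   # training tokens, from the table
    seq_len: int  # assumption: "8k" = 8192, "32k" = 32768


STAGES = [
    Stage("Stage-1", "Vision-Language Alignment", 3e-4, 3e-5, 50_000_000_000, 8192),
    Stage("Stage-2", "Multimodal Pre-training", 2e-4, 5e-5, 300_000_000_000, 8192),
    Stage("Stage-3", "Long-context Pre-training", 8e-5, 5e-6, 80_000_000_000, 32768),
    Stage("Stage-4", "Application-oriented SFT", 2e-5, 1e-6, 24_000_000_000, 32768),
]


def lr_at(stage, progress):
    """Learning rate at a fraction `progress` in [0, 1] of the stage.

    Cosine decay is an assumption; only the endpoints come from the table.
    """
    progress = min(max(progress, 0.0), 1.0)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return stage.lr_end + (stage.lr_start - stage.lr_end) * cosine


# Total token budget across the four stages (454B).
total_tokens = sum(s.tokens for s in STAGES)
```

Summing the `tokens` column gives 454B tokens in total, of which Stage-2 accounts for roughly two thirds.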

Supplementary Material

Evolution of Optical Character Recognition (OCR)

- 1950s-1980s: OCR systems were based on template matching and feature engineering, focusing on basic text recognition in scanned documents.

- 1990s: machine learning

- Mid-2010s: deep learning

- 2020s: vision-language models

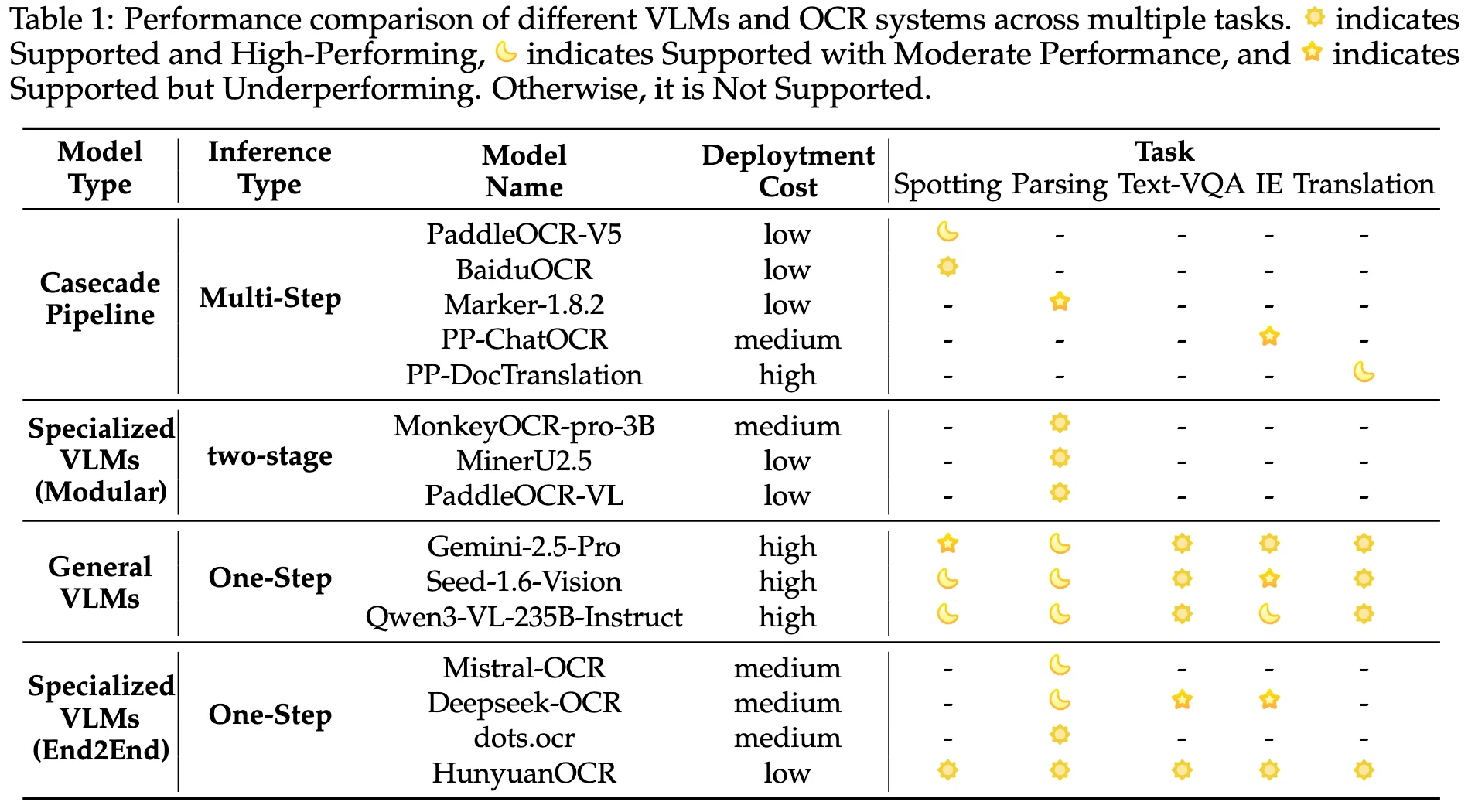

- General VLMs

- Specialized VLMs (Modular): still depend on a preliminary layout analysis module to detect document elements, with the VLM subsequently parsing content within localized regions.

- Specialized OCR Models (End2End)

Performance Comparison of OCR Systems

OCR Tasks

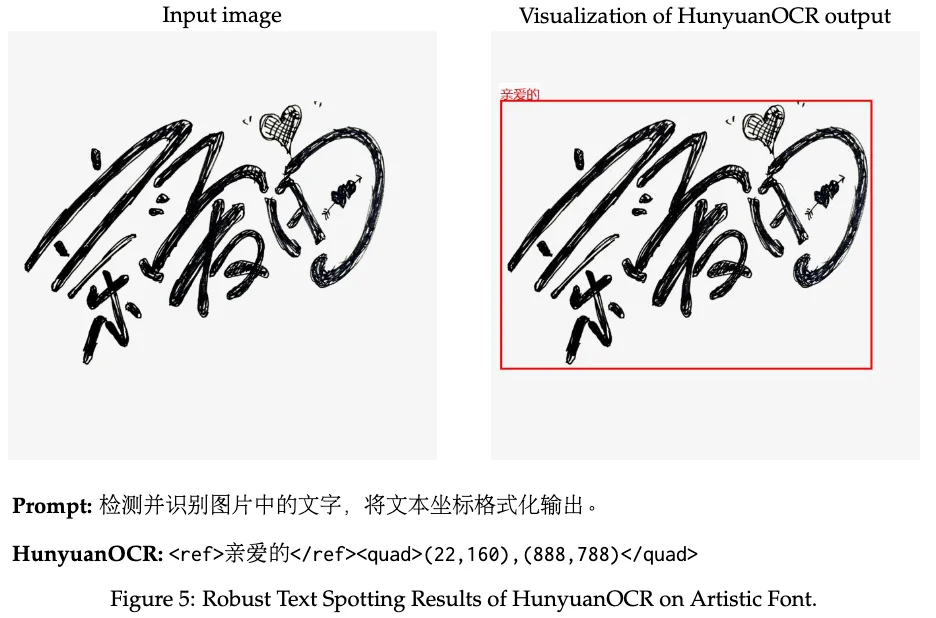

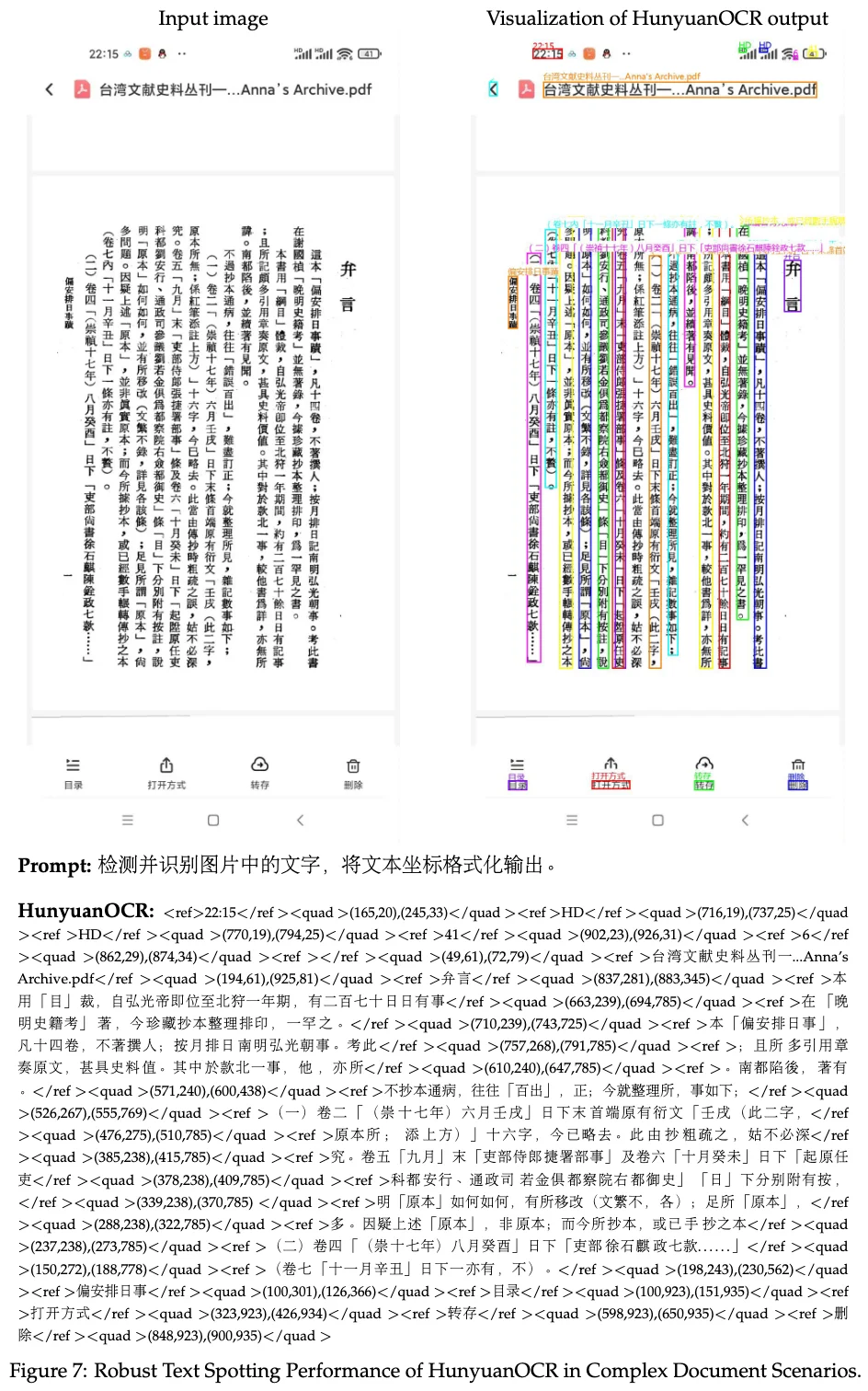

Text Spotting

detect and recognize text within an image, and output the line-level text content and coordinates in a formatted manner: `<ref>text</ref><quad>(x1,y1),(x2,y2)</quad>`

- `<ref>text</ref>`: text content
- `<quad>(x1,y1),(x2,y2)</quad>`: text coordinates given as the top-left and bottom-right vertices, normalized to the range [0, 1000] to maintain consistency across input images of varying resolutions.
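The output format described above can be parsed with a regular expression and mapped back to pixel coordinates. The sketch below is illustrative rather than official post-processing code: `parse_spotting` and `TextLine` are hypothetical names, and it assumes that a normalized value `v` maps to pixels as `v * size / 1000` and that coordinates are emitted as integers.

```python
import re
from dataclasses import dataclass

# One <ref>...</ref><quad>(x1,y1),(x2,y2)</quad> pair; integer coordinates assumed.
SPOT_PATTERN = re.compile(
    r"<ref>(.*?)</ref>\s*<quad>"
    r"\(\s*(\d+)\s*,\s*(\d+)\s*\)\s*,\s*\(\s*(\d+)\s*,\s*(\d+)\s*\)"
    r"</quad>",
    re.DOTALL,
)


@dataclass
class TextLine:
    text: str
    box: tuple  # (x1, y1, x2, y2) in pixels


def parse_spotting(output, width, height):
    """Extract text lines and rescale boxes from [0, 1000] to pixel units."""
    lines = []
    for match in SPOT_PATTERN.finditer(output):
        x1, y1, x2, y2 = (int(v) for v in match.groups()[1:])
        box = (
            round(x1 * width / 1000),
            round(y1 * height / 1000),
            round(x2 * width / 1000),
            round(y2 * height / 1000),
        )
        lines.append(TextLine(match.group(1), box))
    return lines
```

For a 2000×1000 image, the string `<ref>Hello</ref><quad>(100,200),(500,250)</quad>` yields one `TextLine` with text `Hello` and pixel box `(200, 200, 1000, 250)`.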

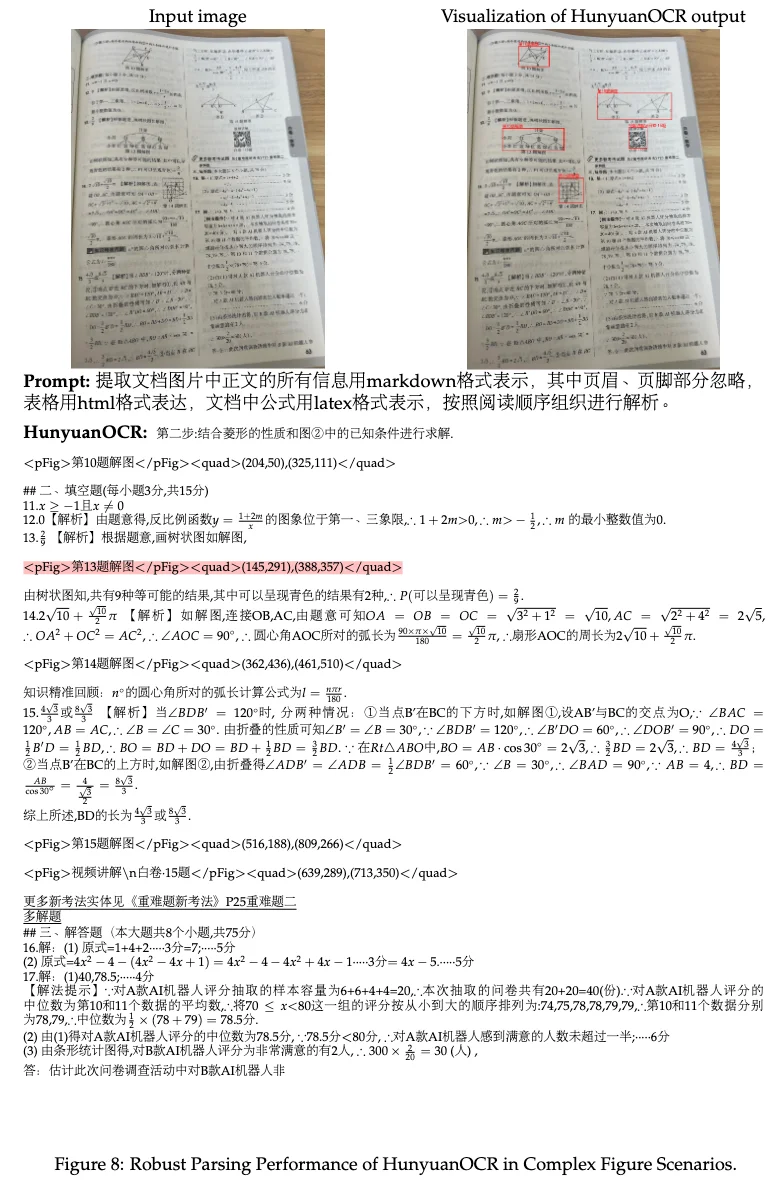

Document Parsing

parse the content of a document image into a structured format.

- Fine-Grained Element Parsing: support independent identification and extraction of specialized document elements, including mathematical formulas, chemical formulas, tables, and charts.

- End-to-End Document Parsing

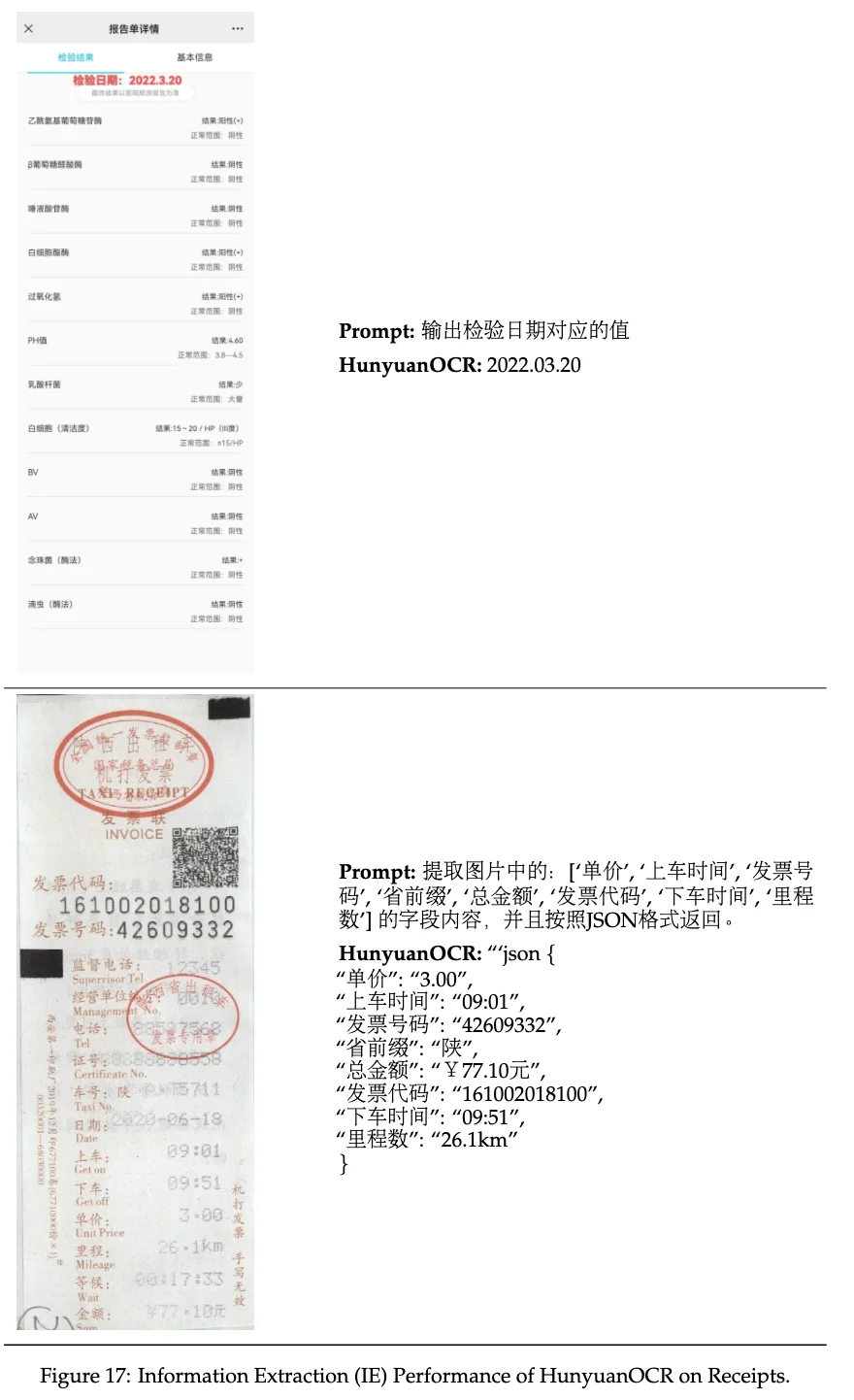

Information Extraction (IE)

extract information from the text.

Visual Question Answering (VQA)

answer questions based on the visual content of a given image.

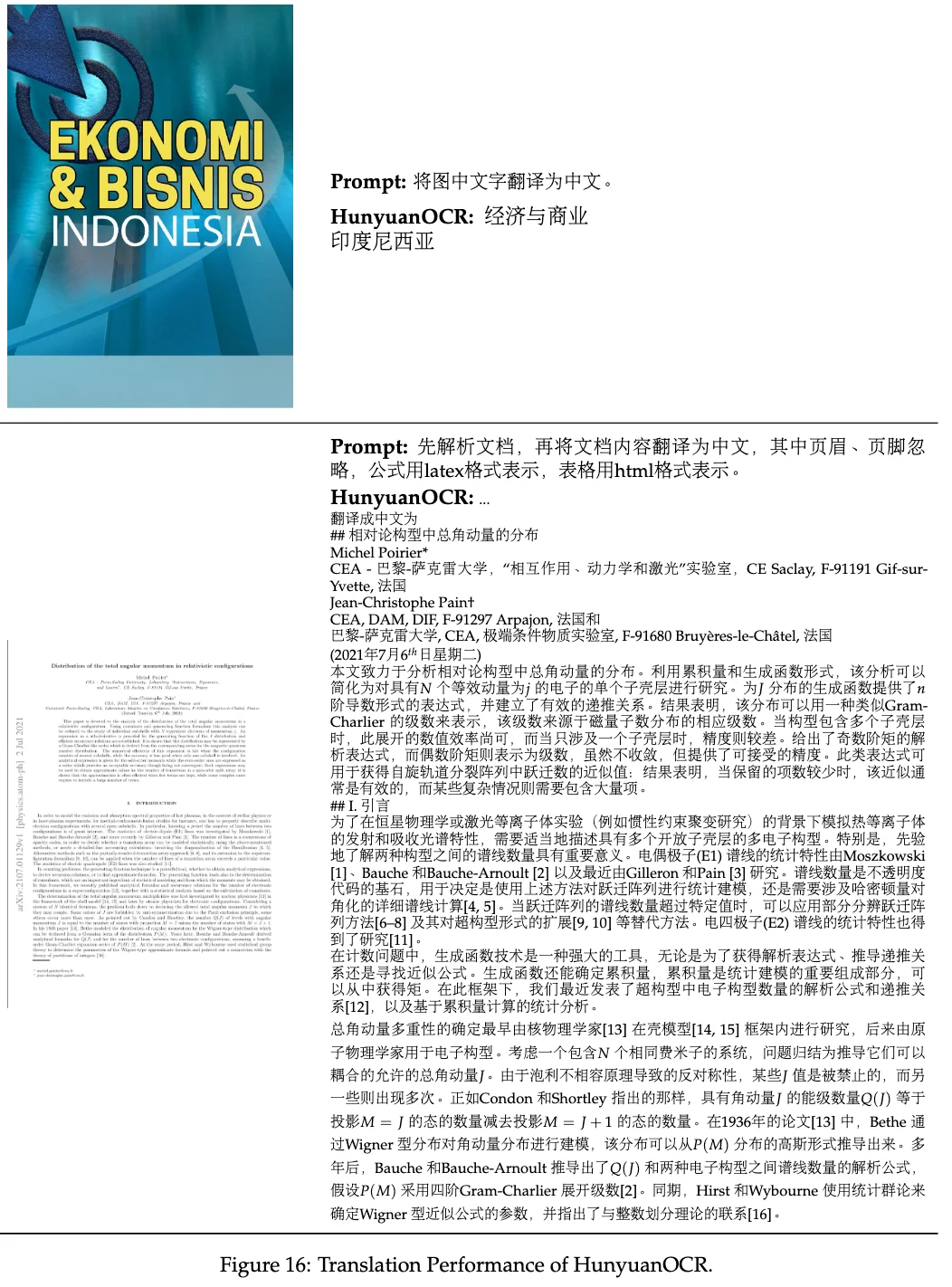

Text Image Translation

translate the text in an image into either Chinese or English.

- support over 14 languages

- support both document-oriented images and general-purpose images

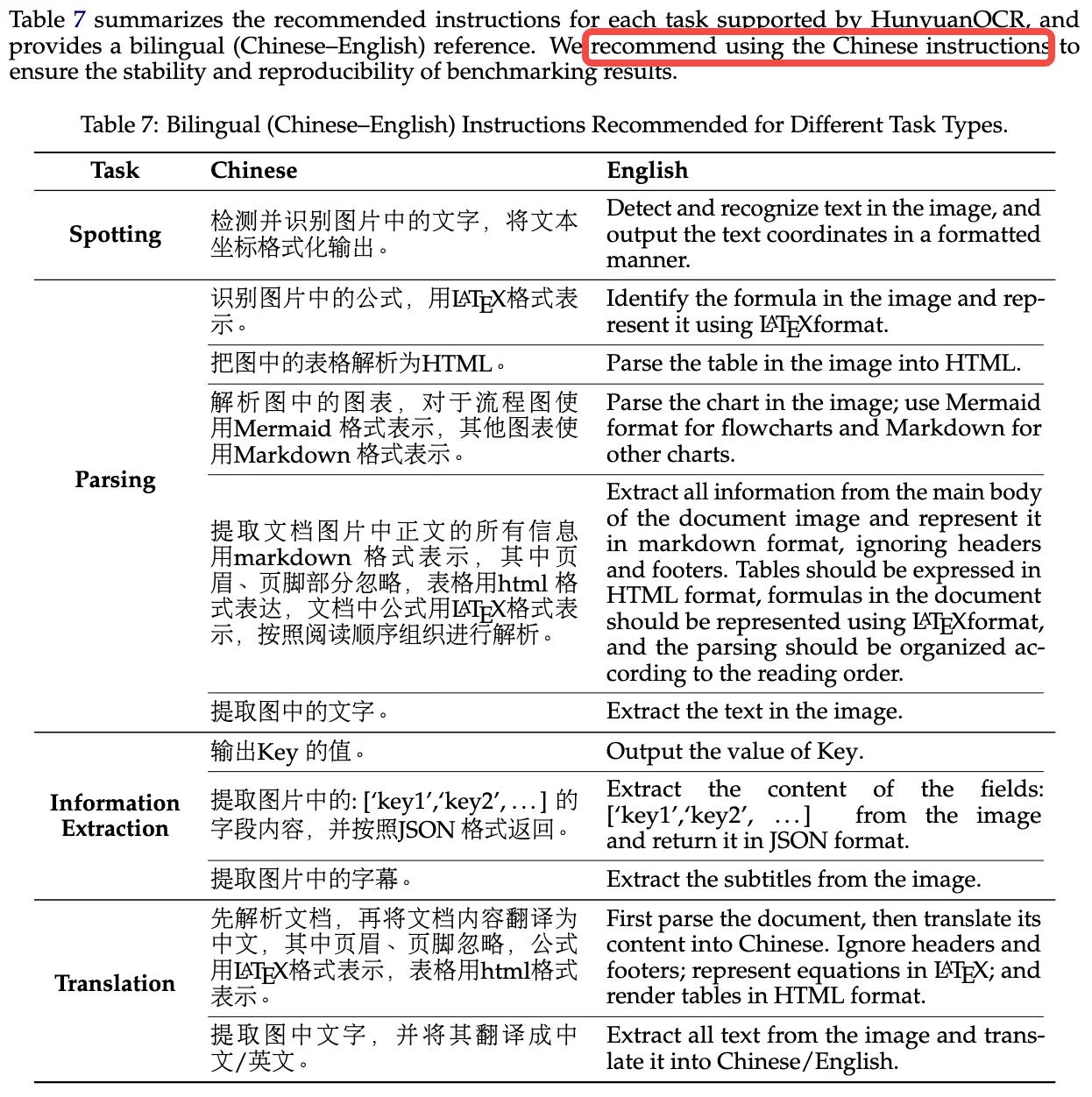

Recommended Instruction

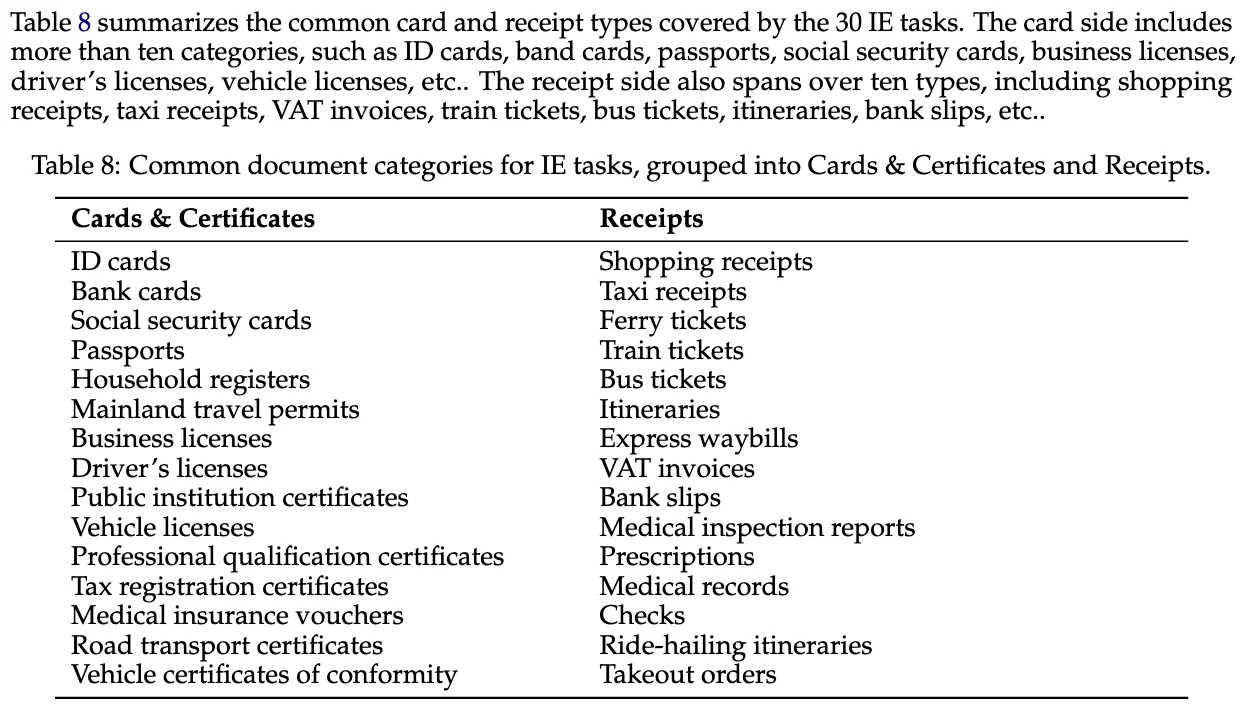

Common Supported IE Categories

Reinforcement Learning Details

Code
