GPT-3: Language Models are Few-Shot Learners

OpenAI arXiv 2005.14165

TL;DR

Motivations & Innovations

Approach

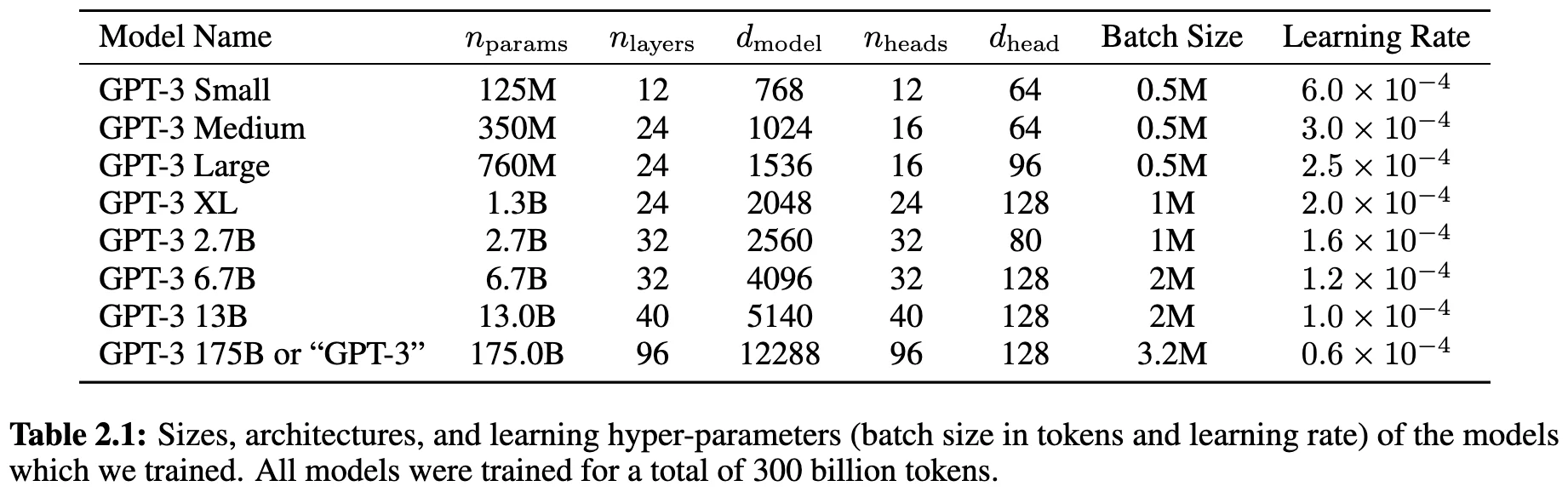

Model

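Same model and architecture as GPT-2 (including its modified initialization, pre-normalization, and reversible tokenization), except that the transformer layers alternate between dense and locally banded sparse attention patterns, similar to the Sparse Transformer. The largest model, GPT-3 175B, has 96 layers, d_model = 12288, 96 attention heads, and a 2048-token context window.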
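To make the alternating attention patterns concrete, here is a minimal sketch that builds boolean causal masks in plain Python. The band width `window`, the choice that even layers are dense and odd layers are banded, and all function names are assumptions for illustration, not values taken from the paper; the actual Sparse Transformer patterns are also more elaborate than a single local window.

```python
# Minimal sketch of alternating dense / locally banded causal attention masks.
# ASSUMPTIONS (not from the paper): the band width `window`, the even/odd
# layer ordering, and every name defined here.


def dense_causal_mask(n):
    """mask[i][j] is True when query position i may attend to key position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def banded_causal_mask(n, window):
    """Causal mask limited to the `window` most recent positions, including i itself."""
    return [[i - window < j <= i for j in range(n)] for i in range(n)]


def layer_masks(num_layers, n, window):
    """Alternate dense and banded masks across layers (hypothetical ordering)."""
    return [
        dense_causal_mask(n) if layer % 2 == 0 else banded_causal_mask(n, window)
        for layer in range(num_layers)
    ]


if __name__ == "__main__":
    masks = layer_masks(num_layers=4, n=6, window=3)
    for row in masks[1]:  # layer 1 is a banded layer under this ordering
        print("".join("x" if allowed else "." for allowed in row))
    # Expected output for the banded layer (window=3, n=6):
    # x.....
    # xx....
    # xxx...
    # .xxx..
    # ..xxx.
    # ...xxx
```

Dense layers keep the full causal context, while banded layers only score about `n * window` query/key pairs instead of roughly `n * n / 2`, which is why mixing the two reduces attention cost.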

Training Recipe

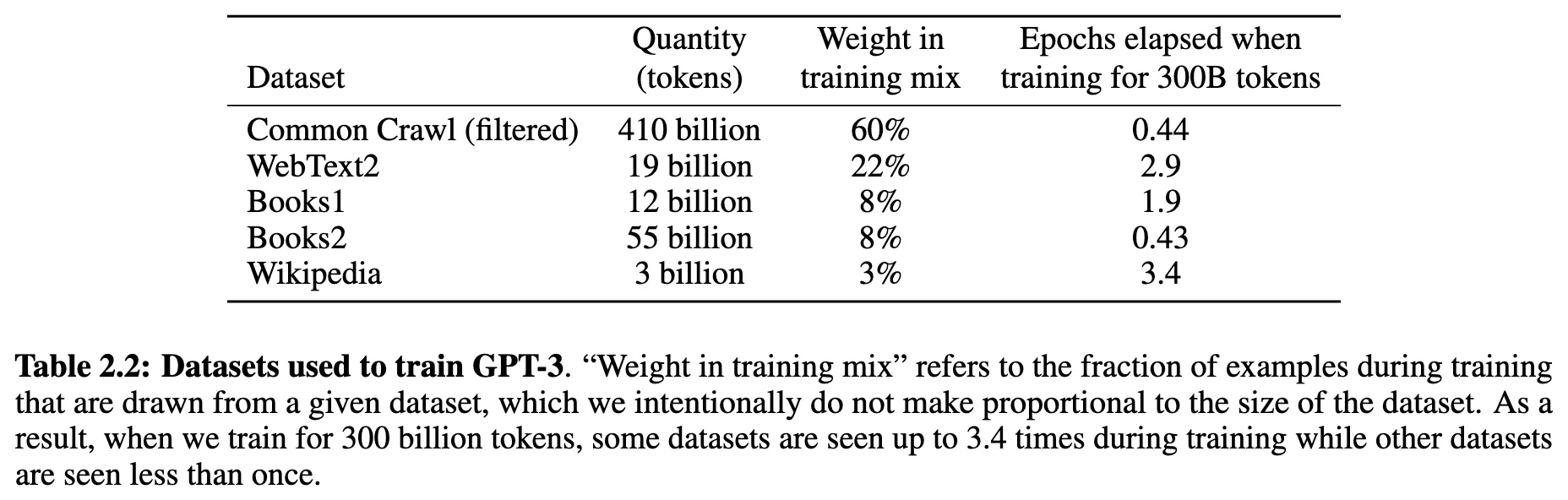

Data Recipe

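GPT-3 builds its 300-billion-token training set on a "quality first" principle. The raw internet scrape (Common Crawl) is heavily cleaned, by filtering on similarity to high-quality reference corpora and by fuzzy document-level deduplication. High-quality curated corpora (WebText2, books, Wikipedia) are then sampled more often than their size alone would imply, so several of them are seen more than once during training, while filtered Common Crawl is seen for less than one epoch.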
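The "sample by quality, not by size" idea can be sketched as weighted sampling over sources. The token counts (in billions) and mixture weights below are the rounded values from the paper's dataset table, to the best of my recollection; the sampler, the `epochs_seen` helper, and all other names are illustrative assumptions rather than the authors' pipeline. Because the weights are rounded (they sum to 1.01), the epoch estimates are only approximate.

```python
# Minimal sketch of weight-based (not size-proportional) sampling across corpora.
# Token counts and weights: rounded values from the GPT-3 dataset table.
# The sampler and helper names are hypothetical, for illustration only.
import random
from collections import Counter

# name: (tokens in billions, weight in the training mix)
DATASETS = {
    "Common Crawl (filtered)": (410, 0.60),
    "WebText2": (19, 0.22),
    "Books1": (12, 0.08),
    "Books2": (55, 0.08),
    "Wikipedia": (3, 0.03),
}

TOTAL_TRAINING_TOKENS_B = 300


def epochs_seen(tokens_b, weight, total_b=TOTAL_TRAINING_TOKENS_B):
    """Approximate passes over a corpus if `weight` of all training tokens come from it."""
    return weight * total_b / tokens_b


def sample_sources(k, seed=0):
    """Draw k source names with probability proportional to mixture weight."""
    rng = random.Random(seed)
    names = list(DATASETS)
    weights = [DATASETS[name][1] for name in names]
    # random.choices normalizes the weights, so the 1.01 total is harmless.
    return rng.choices(names, weights=weights, k=k)


if __name__ == "__main__":
    for name, (tokens_b, weight) in DATASETS.items():
        print(f"{name:<24} {epochs_seen(tokens_b, weight):.2f} epochs")
    print(Counter(sample_sources(10_000)).most_common())
```

Under these weights the small, curated sets (Books1, WebText2, Wikipedia) come out above one epoch, while Common Crawl and Books2 stay below one, which is the trade-off the paragraph describes: repeating high-quality text in exchange for seeing less of the noisier web data.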